How to Build an AI-Powered Knowledge Base Using Notion and LLMs

Most knowledge bases struggle with a problem many teams quietly share: information grows faster than it can be maintained. Notes pile up, documentation becomes outdated, and search turns into guesswork. Over time, people stop trusting the system and return to scattered files, chats, and personal notes.

An AI-powered knowledge base approaches that problem differently. Instead of focusing only on storage, it focuses on retrieval, structure, and continuous relevance. By combining Notion with large language models (LLMs), you can build a system that not only stores information but actively helps you use it.

This guide explains how to design such a system step by step, without turning it into a fragile automation project.

What “AI-Powered” Really Means in a Knowledge Base

An AI-powered knowledge base is not just a database with a chat interface.

In practice, it means:

- Information is stored in a consistent structure

- The AI layer draws on context spanning multiple documents

- Retrieval is based on intent and meaning, not just keywords

- Content evolves as new information is added

The goal is not to replace documentation, but to reduce the effort required to maintain and query it.

Step 1: Design the Knowledge Structure Before Adding AI

AI amplifies structure. It does not fix chaos.

Before connecting LLMs, define a clear schema inside Notion:

- Core databases (Projects, Processes, Concepts, References)

- Standardized fields (status, owner, last updated, source)

- Clear relationships between databases

Each page should answer one question well. Overloaded pages confuse both humans and AI.

This step determines whether your AI layer will be helpful or misleading.
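To make the schema concrete, here is a minimal sketch in Python that mirrors it as plain data classes and checks a page against it. It does not use Notion's API or reflect how Notion stores properties; the `Page` and `Status` types, their field names, and the `schema_errors` helper are hypothetical, chosen only to illustrate standardized fields and relations.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum

# Hypothetical mirror of the Notion schema described above; not Notion's API or data model.
CORE_DATABASES = {"Projects", "Processes", "Concepts", "References"}


class Status(Enum):
    DRAFT = "draft"
    ACTIVE = "active"
    DEPRECATED = "deprecated"


@dataclass
class Page:
    """One knowledge-base page; the fields mirror the standardized properties."""
    page_id: str
    database: str  # one of CORE_DATABASES
    title: str
    status: Status
    owner: str
    last_updated: date
    source: str  # where the information came from (URL, meeting, system)
    related_ids: list[str] = field(default_factory=list)  # relations to other pages


def schema_errors(page: Page, known_ids: set[str]) -> list[str]:
    """Return human-readable problems; an empty list means the page fits the schema."""
    errors = []
    if page.database not in CORE_DATABASES:
        errors.append(f"unknown database: {page.database}")
    if not page.owner.strip():
        errors.append("missing owner")
    if not page.source.strip():
        errors.append("missing source")
    for rid in page.related_ids:
        if rid not in known_ids:
            errors.append(f"broken relation: {rid}")
    return errors
```

A check like this could run over an export of your databases before any content is indexed for the AI layer, so gaps in ownership or sourcing surface early.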

Step 2: Normalize Input Formats

LLMs work best with predictable inputs.

Define simple rules:

- One idea per page

- Clear headings

- Consistent terminology

- Explicit summaries at the top of long documents

For example, every process page might start with:

- What this is

- When it applies

- Key steps

- Known limitations

This consistency allows AI to generate accurate summaries and answers later.

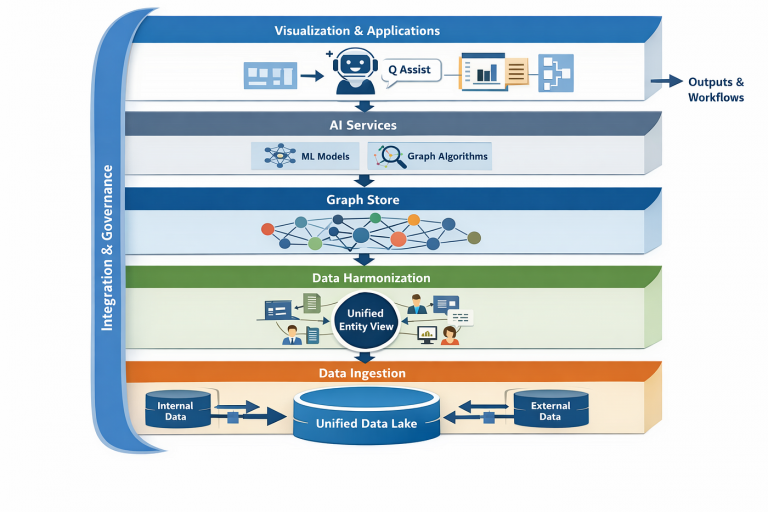
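The template above can be checked mechanically. The sketch below assumes pages are exported as Markdown with the four sections as headings (an assumption; adapt it to however you pull content out of Notion). It reports missing or out-of-order sections and splits a page into per-heading chunks, which gives the AI layer small, self-describing pieces to work with. `REQUIRED_SECTIONS`, `template_problems`, and `chunk_by_heading` are illustrative names.

```python
import re

# Assumption: process pages are exported as Markdown, one heading per template section.
REQUIRED_SECTIONS = ["What this is", "When it applies", "Key steps", "Known limitations"]
HEADING = re.compile(r"^#{1,6}[ \t]+(.+?)[ \t]*$", re.MULTILINE)


def headings(markdown: str) -> list[str]:
    """Return the text of every Markdown heading, in document order."""
    return [m.group(1) for m in HEADING.finditer(markdown)]


def template_problems(markdown: str) -> list[str]:
    """Report template sections that are missing or appear out of order."""
    found = headings(markdown)
    missing = [s for s in REQUIRED_SECTIONS if s not in found]
    problems = [f"missing section: {s}" for s in missing]
    if not missing:
        # Compare the position of each section's first occurrence.
        positions = [found.index(s) for s in REQUIRED_SECTIONS]
        if positions != sorted(positions):
            problems.append("sections are out of order")
    return problems


def chunk_by_heading(markdown: str) -> list[tuple[str, str]]:
    """Split a page into (heading, body) chunks; text before any heading is labeled 'Summary'."""
    chunks: list[tuple[str, str]] = []
    current, body = "Summary", []
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            if any(ln.strip() for ln in body):
                chunks.append((current, "\n".join(body).strip()))
            current, body = match.group(1), []
        else:
            body.append(line)
    if any(ln.strip() for ln in body):
        chunks.append((current, "\n".join(body).strip()))
    return chunks
```

Chunking along the template's own headings keeps each piece on one topic, which is the same "one idea per page" rule applied at the section level.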

Step 3: Connect LLMs for Contextual Retrieval

Once the structure is stable, AI can be introduced as a retrieval layer.

Typical use cases include:

- Asking questions across multiple databases

- Generating summaries from linked pages

- Explaining processes in plain language

- Surfacing related documents automatically

The key difference from keyword search is intent. Instead of only matching exact words, the system retrieves content by meaning, and the LLM interprets what the user is trying to achieve.

This is where a knowledge base becomes usable under pressure.
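One common way to build this retrieval layer is retrieval-augmented generation (RAG): embed the question and the page chunks, pick the closest chunks, and pass them to the LLM with an instruction to cite them. The sketch below shows that flow without tying it to a specific provider. `embed` is any function that maps text to a vector (you would plug in a real embedding model); `toy_embed` is a hashed word-count stand-in included only so the code runs offline, and it matches shared words rather than meaning. The prompt wording is also an assumption.

```python
import math
import zlib
from typing import Callable, Sequence

Vector = list[float]
Embed = Callable[[str], Vector]  # assumption: plug in a real embedding model here


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 when either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: dict[str, str], embed: Embed, k: int = 3) -> list[tuple[str, float]]:
    """Rank chunks (id -> text) by similarity to the query embedding and keep the top k."""
    q = embed(query)
    scored = [(cid, cosine(q, embed(text))) for cid, text in chunks.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


def build_prompt(query: str, chunks: dict[str, str], hits: list[tuple[str, float]]) -> str:
    """Assemble a grounded prompt that asks the model to cite chunk ids as sources."""
    context = "\n\n".join(f"[{cid}]\n{chunks[cid]}" for cid, _ in hits)
    return (
        "Answer using only the context below. Cite sources by their [id]. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


def toy_embed(text: str, dims: int = 256) -> Vector:
    """Offline stand-in only: hashed word counts. It matches shared words, not meaning."""
    vec = [0.0] * dims
    for word in text.lower().split():
        vec[zlib.crc32(word.encode("utf-8")) % dims] += 1.0
    return vec
```

Passing chunk ids through to the prompt is what makes it possible to show sources next to each answer, which Step 5 relies on.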

Step 4: Automate Knowledge Maintenance

The most overlooked benefit of AI is maintenance.

LLMs can help:

- Detect outdated pages

- Suggest missing links

- Highlight contradictions

- Generate update prompts when source data changes
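Sending every page to an LLM on every run can be slow and costly, so a cheap deterministic pre-filter can decide which pages deserve a closer look. The sketch below is that kind of heuristic, not an LLM step: it flags a page if a page it depends on was updated more recently, or if it has not been touched for longer than `max_age`. The 180-day default, the `stale_pages` name, and the `depends_on` mapping (which you might derive from Notion relations) are assumptions.

```python
from datetime import date, timedelta


def stale_pages(
    last_updated: dict[str, date],
    depends_on: dict[str, list[str]],
    today: date,
    max_age: timedelta = timedelta(days=180),  # assumption: tune per team
) -> dict[str, str]:
    """Return page_id -> reason for pages that look outdated (heuristic pre-filter)."""
    reasons = {}
    for pid, updated in last_updated.items():
        # A dependency edited after this page is a strong hint the page may be stale.
        newer = [d for d in depends_on.get(pid, []) if last_updated.get(d, date.min) > updated]
        if newer:
            reasons[pid] = "depends on newer page(s): " + ", ".join(sorted(newer))
        elif today - updated > max_age:
            reasons[pid] = f"not updated in {(today - updated).days} days"
    return reasons
```

Only the flagged pages would then go to an LLM for a contradiction or freshness check, with the reason string included as context.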

For example, when a process is updated, AI can flag dependent documents that may need revision. This keeps the system alive without constant manual audits.

Maintenance becomes driven by actual changes instead of a fixed audit schedule.
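Flagging dependent documents amounts to walking backlinks. Assuming you can build a map from each page to the pages it links to (for instance from relation properties), a breadth-first search over the inverted map finds every page that directly or indirectly references the changed one. `dependents_to_review` is a hypothetical helper; its output is the list you would hand to an LLM or a page owner for review.

```python
from collections import deque


def dependents_to_review(changed: str, links: dict[str, list[str]]) -> list[str]:
    """Given page -> pages it references, return every page that directly or
    transitively references `changed`, nearest first (breadth-first order)."""
    # Invert the link graph: referenced page -> pages that reference it.
    backlinks: dict[str, list[str]] = {}
    for page, targets in links.items():
        for target in targets:
            backlinks.setdefault(target, []).append(page)

    order: list[str] = []
    seen = {changed}
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for page in backlinks.get(current, []):
            if page not in seen:  # guards against cycles in the link graph
                seen.add(page)
                order.append(page)
                queue.append(page)
    return order
```

Transitive search can over-flag in a densely linked workspace; capping the search depth is a simple way to keep the review list short.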

Step 5: Add Human-in-the-Loop Controls

Blind automation erodes trust.

Effective systems always include:

- Manual approval for content updates

- Clear version history

- Visible sources for AI-generated answers

AI should assist, not overwrite. Humans remain responsible for truth and accuracy.

This balance ensures the knowledge base stays reliable over time.
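These controls can be enforced in code rather than by convention. In the minimal sketch below, AI output can only become a pending `Suggestion` that must cite sources, and a named human has to approve it before the page changes; the previous text is kept as version history. `KnowledgeBase`, `Suggestion`, and `Review` are hypothetical in-memory stand-ins, not Notion features.

```python
from dataclasses import dataclass, field
from enum import Enum


class Review(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Suggestion:
    """An AI-proposed edit waiting for a human decision (hypothetical structure)."""
    page_id: str
    new_text: str
    sources: list[str]  # page ids the AI relied on, shown to the reviewer
    state: Review = Review.PENDING
    reviewed_by: str = ""


@dataclass
class KnowledgeBase:
    """In-memory stand-in for the knowledge base; not a Notion API."""
    pages: dict[str, str] = field(default_factory=dict)
    history: dict[str, list[str]] = field(default_factory=dict)  # page_id -> earlier versions

    def propose(self, page_id: str, new_text: str, sources: list[str]) -> Suggestion:
        """AI output only ever becomes a pending suggestion; no page is overwritten here."""
        if not sources:
            raise ValueError("AI suggestions must cite at least one source")
        return Suggestion(page_id, new_text, list(sources))

    def _close(self, suggestion: Suggestion, reviewer: str, state: Review) -> None:
        """Record who decided; each suggestion can be reviewed only once."""
        if suggestion.state is not Review.PENDING:
            raise ValueError("suggestion has already been reviewed")
        suggestion.state, suggestion.reviewed_by = state, reviewer

    def approve(self, suggestion: Suggestion, reviewer: str) -> None:
        """A named human applies the change; the previous text is kept in history."""
        self._close(suggestion, reviewer, Review.APPROVED)
        previous = self.pages.get(suggestion.page_id)
        if previous is not None:
            self.history.setdefault(suggestion.page_id, []).append(previous)
        self.pages[suggestion.page_id] = suggestion.new_text

    def reject(self, suggestion: Suggestion, reviewer: str) -> None:
        """Record the decision without touching the page."""
        self._close(suggestion, reviewer, Review.REJECTED)
```

The design choice is that the write path only exists behind `approve`, so "AI should assist, not overwrite" holds even if an automation misbehaves.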

Step 6: Build Retrieval Workflows, Not Just a Chat Interface

Many teams stop at “ask the knowledge base.”

Better systems integrate AI retrieval into workflows:

- Project kickoffs pull relevant documentation automatically

- New hires receive contextual summaries

- Decision reviews include historical context

Knowledge becomes embedded, not consulted.

This is where real efficiency gains appear.
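As an example of the first workflow, a kickoff hook might assemble a short reading list when a new project page is created. The sketch ranks documents by how many tags they share with the project, purely to stay self-contained; in practice you might rank with the same semantic retrieval used for questions and add an LLM-written summary. `Doc`, `kickoff_briefing`, and the tag-based matching are assumptions, and connecting it to whatever trigger your setup provides is left out.

```python
from dataclasses import dataclass, field


@dataclass
class Doc:
    """Minimal document record (hypothetical; not a Notion object)."""
    doc_id: str
    title: str
    tags: set[str] = field(default_factory=set)
    summary: str = ""


def kickoff_briefing(project_tags: set[str], docs: list[Doc], limit: int = 5) -> str:
    """Build a reading list from docs sharing tags with the project, most overlap first."""
    ranked = sorted(
        (d for d in docs if d.tags & project_tags),
        key=lambda d: (-len(d.tags & project_tags), d.title),  # ties broken by title
    )[:limit]
    if not ranked:
        return "No related documentation found; consider creating some."
    lines = ["Relevant documentation for this project:"]
    for d in ranked:
        shared = ", ".join(sorted(d.tags & project_tags))
        lines.append(f"- {d.title} ({shared}): {d.summary}")
    return "\n".join(lines)
```

Showing the shared tags next to each title gives the reader a visible reason for every recommendation, in the same spirit as citing sources for AI answers.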

Common Mistakes to Avoid

- Treating AI as a shortcut instead of a layer

- Feeding unstructured notes directly into LLMs

- Automating updates without review

- Ignoring ownership and accountability

- Expecting AI to fix poor documentation habits

AI makes good systems better and bad systems louder.

When This Approach Makes Sense

An AI-powered knowledge base is most valuable when:

- Information changes frequently

- Multiple people rely on shared context

- Decisions depend on historical knowledge

- Manual documentation upkeep is already failing

If your knowledge base is static and rarely queried, AI adds little value.

Final Thoughts

Building an AI-powered knowledge base is not a tooling project. It is a systems design problem.

Notion provides the structure. LLMs provide the reasoning layer. The value emerges only when both are aligned around clear workflows and responsibilities.

When done right, the knowledge base stops being a repository and starts functioning as an operational memory. That shift is what turns documentation from a burden into an asset.